A New Theory of Consciousness — Recursive Social Prediction, Mathematical Formalization, and the End of the Hard Problem

Abstract

I. Introduction: The Seductive Error of Raw Qualia

II. The Traditional Framework and Its Hidden Assumptions

III. The Alternative: Meaningful Integration

IV. The Feeling of Consciousness: Social Prediction Redirected at Self

V. Evolutionary, Developmental, and Clinical Evidence

VI. Objections and Replies: Addressing the Social-Predictive Account

VII. What It’s Like to Be a Bat: The Question Reframed

VIII. Philosophical Implications and Objections

IX. Empirical Implications and Predictions

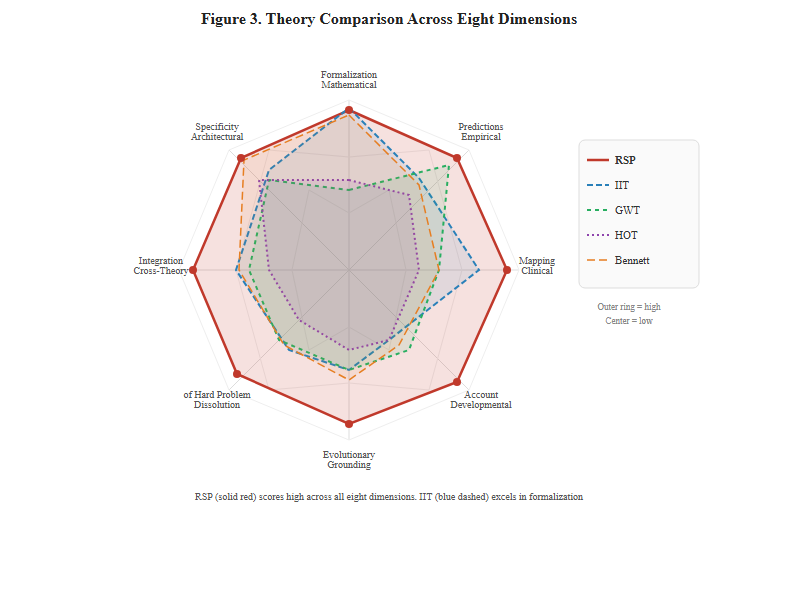

X. Comparison with Alternative Views

XI. Conclusion: Beyond the Bat

Author’s Note & Methodological Note

References

Appendix A: RSP as Hierarchical Predictive Coding

Appendix B: Free Energy Formulation of RSP

Appendix C: Intrinsically Motivated Reinforcement Learning in RSP

Appendix D: Control-Theoretic Dynamics of the Strange Loop

Appendix E: Unified Mathematical Framework

Appendix F: Equation Reference Sheet

Appendix G: Clinical Prediction Matrix

Appendix H: RSP Theory of Intelligence

by Daniel Simon Jr.

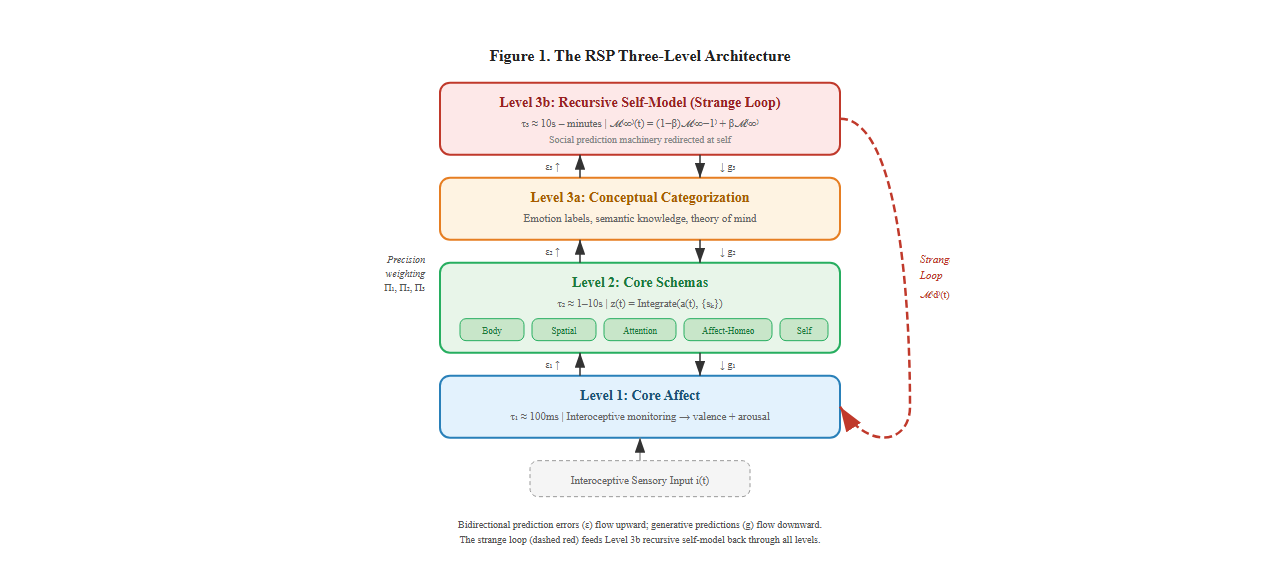

Thomas Nagel’s question "What is it like to be a bat?" has shaped consciousness studies for fifty years, yet the question rests on a flawed assumption: that phenomenal experience consists of "raw qualia," pre-conceptual, meaning-independent subjective states. This paper argues that the assumption creates pseudoproblems that dissolve once we recognize conscious experience is constituted by meaningful integration from its inception. I propose the Recursive Social Prediction (RSP) model, a three-level architecture in which phenomenal consciousness arises from the brain’s integration of biological core affect with learned conceptual categories through recursive self-modeling. Level 1 (core affect) provides valence and arousal via interoceptive monitoring. Level 2 (core schemas) organizes experience through five foundational schemas: body, spatial, attentional, affective-homeostatic, and self. Level 3 subdivides into conceptual categorization (3a) and the recursive self-modeling "strange loop" (3b), where social prediction machinery is redirected at the self, constituting phenomenal consciousness. The paper defends a strong dissolution of the hard problem: phenomenology IS recursive self-modeling, not a separate property that could be absent from it. The framework is formalized across eight appendixes, including five core mathematical formalizations (hierarchical predictive coding, variational free energy, intrinsically motivated reinforcement learning, cascade feedback control, and a unified master functional with algorithmic specification), an equation reference sheet, a clinical prediction matrix, and a formal theory of intelligence, with proofs of cross-formalism equivalence showing the four descriptions derive from a single variational functional. Evolutionary, developmental, and clinical evidence supports the architecture, including a mapping of clinical dissociations onto specific architectural failures. The paper generates seven falsifiable predictions, addresses ten philosophical objections (including Jackson’s knowledge argument and Descartes’ Cogito), and compares RSP against nineteen competing theories of consciousness across ten families. The bat question should not be "What is it like?" but "What meaningful integrations does a bat’s architecture perform, and how do they differ from ours?"

When Thomas Nagel (1974) asked "What is it like to be a bat?", he articulated an intuition that feels undeniable: there exists something it is like to have an experience, a subjective, qualitative character that seems irreducible to physical processes. This "something it is like" appears to be prior to any conceptualization, any linguistic description, any cognitive processing. It seems to be raw.

The intuition has proven remarkably powerful. It grounds the "hard problem" of consciousness (Chalmers, 1995), motivates arguments for property dualism, and makes consciousness appear uniquely resistant to scientific explanation. Accept that phenomenal experience has this raw, pre-conceptual character, and we face what appears to be an unbridgeable explanatory gap between physical processes and subjective experience.

But what if the intuition, however persuasive, is simply wrong? What if the very notion of "raw qualia" commits a category error, positing an entity (pure, meaning-independent experience) that cannot exist because experience is necessarily constituted by meaning?

This paper develops three interconnected claims:

First, the assumption that phenomenal experiences are "raw qualia" (pure, pre-conceptual phenomenal qualities existing independently of categorization or meaning) rests on a conceptual confusion. Experience cannot be separated from meaning any more than a word can be separated from language or a move from the game of chess.

Second, conscious experiences are better understood as meaningful integrations: the emergent products of the brain’s integration of biological core affect (valence and arousal) with culturally learned conceptual categories. The phenomenology does not exist at either level independently. It emerges from their synthesis.

Third, this reconceptualization dissolves rather than solves certain philosophical puzzles. Questions like "Do we all see the same red?" or "What is it like to be a bat?" assume something (raw, meaning-independent experience) that doesn’t exist. The questions need reframing, not answering.

This is not mere conceptual housekeeping. The raw qualia assumption has consequences:

If the argument here succeeds, the result is not just conceptual clarity but new empirical research programs and productive connections across previously isolated domains.

Previous attempts to dissolve the hard problem — from Dennett’s (1991) quining of qualia to Frankish’s (2016) illusionism — have rejected the explanatory gap but without providing a positive architecture that specifies what consciousness is rather than what it is not. This paper goes further: beyond proposing an architecture, it argues that the three-level structure represents the necessary conditions of possibility for any consciousness whatsoever, drawing on convergent evidence from phenomenology (Husserl, Merleau-Ponty), existentialism (Sartre), philosophy of biology (Jonas, Thompson), and analytic philosophy (Strawson, McDowell). The result is a theory that is simultaneously empirically grounded, mathematically formalized, and philosophically defended as structurally necessary — a combination not previously achieved in consciousness studies.

The RSP architecture is formalized mathematically in five companion appendixes: as a hierarchical predictive coding network (Appendix A), a variational free energy functional (Appendix B), an intrinsically motivated model-based reinforcement learning system (Appendix C), and a multi-loop feedback control system (Appendix D). These formalizations show that the three levels, the strange loop, and the developmental construction sequence are not metaphors but consequences of well-defined dynamical systems with provable convergence properties.

Let us examine Nagel’s (1974) question carefully. He asks us to consider what it is like to be a bat, emphasizing that bats experience the world through echolocation, a sensory modality humans lack. His central claim: "an organism has conscious mental states if and only if there is something that it is like to be that organism—something it is like for the organism" (p. 436).

This formulation appears innocent. It smuggles in crucial assumptions:

Assumption 1: Experience-as-substance. The phrase "something it is like" treats experience as a kind of substance or property that can exist independently of any relational or functional context. It implies experience has intrinsic phenomenal character that could, in principle, be identical or different across individuals.

Assumption 2: Pre-conceptual phenomenology. Nagel’s thought experiment asks us to imagine directly accessing bat experience, suggesting that phenomenology exists prior to and independently of conceptual frameworks. The experience is presumed to have a "what-it’s-like" character not constituted by categorization or meaning-making.

Assumption 3: Phenomenal comparability. The question "Do we all see the same red?" (a variant of Nagel’s concern) assumes there exists a determinate fact about phenomenal similarity that, if only we could access it, would answer whether your red-experience matches mine. This presumes experiences are the sorts of things that can be identical or different in the relevant sense.

Assumption 4: Privacy of qualia. If each individual has their own raw experiences inaccessible to others, then consciousness is fundamentally private. Communication about experience becomes problematic. How can we know we mean the same thing by "red"?

These assumptions have deep roots. Locke’s (1689/1975) discussion of primary versus secondary qualities distinguished between objective properties (shape, motion) and subjective experiences (color, taste). Hume (1739/1978) treated impressions as mental atoms. The sense-data theorists of the early 20th century went further, explicitly positing raw sense-data as the foundation of perceptual knowledge.

Contemporary philosophy retains this framework. Chalmers’ (1996) "hard problem" asks why physical processes are accompanied by experience, why there is "something it is like," treating phenomenal character as an explanandum distinct from functional or cognitive processes. Block’s (1995) distinction between phenomenal consciousness (raw experience) and access consciousness (functionally available experience) presupposes that phenomenology can be separated from its functional role.

Why has this framework been so persistent? Because introspection seems to reveal raw experiences. When you look at something red, you seem to access a pure phenomenal quality, a redness, that exists prior to your conceptualizing it as red, associating it with anything, or using it for any function.

But introspection is not transparent access to the structure of experience. It is itself a cognitive process that can mislead us about its own workings. The seeming givenness of raw experience may be an artifact of how consciousness presents itself, not an accurate reflection of how it is constituted.

The raw qualia assumption makes consciousness appear inexplicable while simultaneously preventing us from asking better questions. Accept that experiences are raw, meaning-independent phenomena, and we must face the explanatory gap: how could physical processes ever produce these mysterious, intrinsic, private qualia? But if experience is not constituted by raw qualia, then different questions become relevant. Empirical questions about meaningful integration.

To build an alternative account, I begin with something genuinely pre-conceptual: core affect (Russell, 2003; Barrett & Bliss-Moreau, 2009). Core affect refers to neurophysiological states characterized by two dimensions: valence (pleasant/unpleasant) and arousal (activated/calm). These states arise from interoceptive monitoring of bodily conditions and subcortical processing.

Crucially, core affect is:

Core affect is not yet experience in the rich sense we care about. An infant experiencing unpleasant high-arousal core affect does not yet feel "angry" or "scared." The infant feels something, but that something is global and undifferentiated. Core affect provides the raw material for feelings. It is not itself what we typically mean by conscious emotional experience.

Before examining high-level conceptual categorization, it is essential to recognize an intermediate structural layer: core schemas, pre-reflective and continuously operating internal models that provide the foundational architecture for all conscious experience (Gallagher, 2005; Metzinger, 2003; Graziano, 2013).

Research converges on five fundamental schema types that constitute the basic structure of consciousness:

Body schema: Pre-reflective sensorimotor capacities that create an implicit spatial-proprioceptive framework. This operates continuously below awareness, structuring how you experience your body’s location, posture, and capabilities. When you reach for a coffee cup, you don’t consciously calculate limb positions; the body schema handles this transparently. This provides the most primitive conscious foundation: the sense of being embodied.

Self-schema: The phenomenal self-model creating the unified sense of being a coherent entity persisting through time. This includes three essential properties: mineness (this experience is mine), perspectivalness (I am the immovable center of experience), and continuity (I am the same "I" across time). The self-schema integrates multiple component processes into the unified first-person perspective.

Attention schema: The brain’s simplified model of its own attention process (Graziano, 2013). This schema evolved for social cognition, specifically for modeling where others direct attention, and consciousness emerges when the same machinery turns inward to model your own attention. The brain regions computing "Person X is aware of Y" also compute "I am aware of Y." This makes consciousness fundamentally a social-perceptual construct.

Spatial schema: Organizing frameworks that structure not just literal space but abstract cognition. Hippocampal-parietal systems provide the scaffold for organizing memory, conceptual knowledge, temporal relations, and even social understanding. Your mental models are inherently spatial: memories are "places" you visit, time flows "forward," social hierarchies are "above" or "below."

Affective-homeostatic schema: The continuous monitoring of bodily states that generates primordial feelings. Damasio (1999) calls this the "proto-self." It differs from discrete emotions: the elementary feeling of existence, the background sense of being alive, the continuous affective tone arising from interoceptive awareness of hunger, thirst, pain, pleasure, fatigue, vitality.

These schemas share crucial properties: they operate pre-reflectively (below conscious awareness), they exhibit transparency (experienced as reality rather than as representations), they perform control functions, and they provide organizing structure for higher-level processing.

The schemas are not learned concepts but developmental achievements. Infants don’t learn to have a body schema; it develops through sensorimotor experience. The self-schema begins emerging around 18-24 months when children recognize themselves in mirrors (Amsterdam, 1972), indicating a developing sense of self as a distinct entity. Understanding that others see them differently (visual perspective-taking) develops later, closer to 4-5 years alongside theory of mind. These are proto-concepts: structural organizations that enable conceptual thought rather than being products of it.

Building on these foundational schemas, humans acquire through social learning high-level conceptual categories, culturally specific concepts like "anger," "anxiety," "schadenfreude," "amae," that organize and give meaning to core affective states (Barrett, 2017).

This is not mere labeling of pre-existing feelings. The concept application constitutes the specific feeling. When your brain categorizes current core affect (unpleasant, high-arousal) as an instance of "anger" (given situational context suggesting provocation), the experience of feeling angry emerges. The phenomenology of anger—its particular quality, its action tendencies, its social meanings—is constituted by this categorization process.

But crucially, this conceptual categorization operates through the core schemas. The concept "anger" gets integrated with:

The feeling of anger is not just the concept "anger" applied to core affect. It is the concept integrated through all these schemas simultaneously.

Evidence for this constitutive role of concepts includes:

Cross-cultural variation: Emotions don’t translate cleanly across languages because different cultures have genuinely different emotion concepts that create different phenomenologies. German Schadenfreude and Japanese amae don’t map to English emotions because the concepts create experiential possibilities English lacks (Russell, 1991; Wierzbicka, 1999).

Emotional granularity: People with more differentiated emotion concepts have genuinely different emotional experiences, not just better descriptions of identical experiences (Barrett et al., 2001; Kashdan et al., 2015). Someone who distinguishes "anxious," "worried," "tense," and "apprehensive" has four distinct feelings where someone with coarser concepts has variations of "feeling bad."

Developmental emergence: Children don’t gradually access pre-existing emotions; they acquire differentiated emotional experiences as they learn emotion concepts (Widen & Russell, 2008). A toddler’s emotional life is simpler not because they can’t describe complex emotions but because they haven’t yet constructed them.

Modern predictive processing frameworks (Clark, 2013, 2016; Friston, 2010; Seth, 2013) add a crucial mechanistic insight: the brain is fundamentally a prediction machine. Perception is not passive reception of sensory input but active prediction of input’s causes.

When you see something red, your brain doesn’t receive raw red-data that it then categorizes. Rather, your brain predicts "This is RED" based on prior learning, and this prediction:

The experience of seeing red IS this integrated prediction. There is no stage at which "raw redness" exists independently of these predictions and integrations. The feeling of red, what-it’s-like to see red, is the brain’s multi-layered prediction integrating sensory evidence with conceptual knowledge, emotional associations, and contextual expectations.

Bringing these elements together: conscious feelings are meaningful integrations of biological core affect with foundational schemas and culturally learned conceptual categories, situated in predictive models that connect perception, emotion, memory, and action.

Notice the crucial three-level architecture:

Level 1: Core Affect (biological foundation)

Level 2: Core Schemas (proto-conceptual structure)

Level 3: Recursive Social Prediction (explicit meaning + self-modeling)

The feeling doesn’t exist at any single level:

The feeling emerges from their integration. When all these processes (interoceptive signaling, core affect, schema activation, conceptual categorization, predictive modeling, contextual embedding) operate in concert, the result is phenomenal consciousness: experience with a particular qualitative character.

This layered view resolves several puzzles:

Animal consciousness without language: Animals have core affect and core schemas but lack linguistic concepts. They have genuine structured experience, not "raw feels" but schema-organized affects. A dog experiences fear (unpleasant high-arousal organized through body schema, attention schema, and rudimentary self-schema) even without the concept "fear."

Infant consciousness development: Infants progress from core affect with rudimentary schemas (birth) through increasingly schema-structured experience (0-18 months) to conceptually elaborated feelings (18+ months). Each stage is genuinely conscious but with increasing sophistication.

Cross-cultural variation with biological universals: All humans share core affect and develop similar core schemas (with variation based on sensorimotor experience), but cultural concepts vary dramatically. This explains both universality (shared affects and schemas) and diversity (different conceptual elaborations).

The role of social cognition: The attention schema—one of the core foundational schemas—is inherently social. Graziano’s (2013) attention schema theory proposes that consciousness IS the brain’s model of attention, and this model evolved for social purposes. The same neural machinery (temporoparietal junction, superior temporal sulcus) that computes "Person X is aware of Y" also computes "I am aware of Y." Consciousness is fundamentally social-perceptual from its schematic foundation, not just in its conceptual elaboration.

Proto-concepts as bridge: The schemas function as proto-concepts, not linguistic or fully explicit, but structured patterns of organization that enable later concept learning. When an infant develops the attention schema, they’re not yet thinking "I am aware," but they have the structural foundation that will later support that concept. The schemas provide the architecture into which linguistic concepts will fit.

A crucial clarification: this account does not reduce consciousness to underlying processes while making phenomenology causally inert. Meaningful integration itself has causal efficacy at its own level of description.

Consider software running on hardware. The software’s causal powers are real. Microsoft Word causes documents to be created, even though the software is implemented in hardware. The causal story at the software level (algorithms, data structures, program flow) is explanatory and cannot be eliminated in favor of hardware-level descriptions without losing real causal structure.

Similarly, conscious feelings have genuine causal powers. Your experience of fear causes you to flee, not merely the underlying neural activity but the integrated meaningful state (fear) that those neurons implement. The phenomenology shapes subsequent predictions, attention, learning, and action. The feeling is not an inert by-product but an active component of the mind’s predictive architecture.

Addressing the causal exclusion problem: Kim (1998, 2005) argues that if physical causation is complete (every physical event has a sufficient physical cause), then mental causation is either identical to physical causation or epiphenomenal. The RSP model responds that mental and physical descriptions are not two competing causal stories but two levels of description of the same causal process, what Dennett calls "real patterns." The three-level RSP architecture describes organizational properties of neural activity (prediction error flows, precision weighting, recursive self-modeling) that are multiply realizable and causally explanatory at their own level. The causal work is done by the pattern of neural activity, not by neural activity as such. Removing the pattern (disrupting the three-level architecture) disrupts both function and phenomenology, as clinical dissociation evidence confirms (§IX, Prediction 6). The RSP model thus commits to a specific form of non-reductive physicalism: consciousness is constituted by physical processes organized in a specific way, and the organizational description is causally indispensable.

The three-level architecture described above invites a stronger claim than has yet been made explicit. The RSP levels are not merely one way consciousness happens to be organized in mammals. They represent the conditions of possibility for the kind of consciousness we can coherently describe and investigate.

The parallel with Kant (1781/1998) is instructive. This transcendental approach has currency in both the continental and analytic traditions. Strawson’s The Bounds of Sense (1966) defended the necessary structure of experience from within analytic philosophy, while McDowell’s Mind and World (1994) argued that experience must be conceptually structured to be rationally evaluable — a claim that resonates with RSP’s insistence on Level 3a conceptual categorization as a condition of fully articulate consciousness. Kant argued that space, time, and causality are not features of a world we happen to encounter but preconditions for any experience of a world at all. Without spatial ordering, temporal succession, and causal connection, there is no coherent perception — only noise. The transcendental argument does not proceed by surveying all possible minds and checking whether each one uses space and time. It proceeds by asking what must be in place for there to be a subject of experience at all. The RSP architecture admits the same style of argument, applied not to the forms of intuition but to the organizational prerequisites of subjectivity.

Level 1 is necessary because mattering is the ground of subjectivity. For there to be consciousness at all, experience must matter to the experiencing system. A system for which nothing is better or worse (for which no state carries valence) has no stake in its own processing. It has no vantage point from which experience could be for anything. This is not a biological accident but a definitional requirement: valence is the minimum condition for a subject position. Jonas (1966) makes an analogous point about metabolism — the organism’s needful relation to its environment is not an incidental feature of life but the condition under which anything like concern, and therefore anything like a point of view, first becomes possible. Thompson (2007) extends this insight through the concept of autopoiesis: a self-maintaining system that actively distinguishes itself from its environment is the most primitive structure in which "mattering" can take root. Without Level 1, there is no subject for whom subsequent levels could be organized.

Level 2 is necessary because unstructured mattering is not yet experience. Even granting valenced concern, consciousness without organizational structure is, in James’s phrase, a "blooming, buzzing confusion." For experience to be of something — for it to have content and not merely tone — it must be organized. The five schemas are not one possible vocabulary among many. They are the minimum structural conditions identified by this analysis, though I cannot rule out that radically different architectures might achieve analogous functions through different organizational primitives. One cannot have experience without a body through which it is received (body schema), a location from which it is had (spatial schema), a sense of what matters more and less urgently (affective-homeostatic schema), selective engagement with some features rather than others (attention schema), and a subject who persists through it (self schema). These are not features of experience but prerequisites for it. Merleau-Ponty (1945/2012) arrives at a similar conclusion from the phenomenological side: the body is not an object in the experiential field but the condition under which there is an experiential field at all. Husserl (1913/1983) makes the parallel point for intentional structure: consciousness is always consciousness of something, and this directedness requires organizational form that precedes any particular content.

Level 3b is necessary because awareness requires self-modeling. For there to be not just processing but awareness of processing (not just states but a subject who has states), the system must model itself as the locus of its experiences. And that modeling must be recursive, because the model is itself one of the system’s states, and a model that failed to represent its own contribution to the system’s state would be incomplete in a way that undermines the very subjectivity it constitutes. The "I" is not an entity that exists independently and then becomes conscious; the "I" is constituted by the recursive loop. Sartre (1943/1956) identified this structure as the pour-soi: consciousness is always already self-aware, not through a second act of reflection but through the self-referential structure of awareness itself. Fichte’s (1794) Tathandlung — the self-positing act in which the "I" constitutes itself by recognizing itself — captures the same recursion from the idealist tradition.

Just as Kant’s categories have been contested — with alternative transcendental frameworks proposed by neo-Kantians and pragmatists — the specific five-schema instantiation of Level 2 may represent the mammalian solution to a structural problem that admits other solutions. What the transcendental argument establishes is the ordering constraint (mattering before structure before recursion), not the uniqueness of the specific implementation.

The mathematical formalisms of the appendixes already encode this necessity without having named it as such. The free energy formulation makes the dependency explicit: Level 2 prediction error is defined as , which contains Level 1 expectations as a term. If

is undefined,

is undefined — Level 2 cannot operate without Level 1 output. The cascade constraint

specifies that inner loops must stabilize before outer loops can operate, not as an engineering preference but as a logical dependency: a control system cannot correct for errors at a timescale it has not yet resolved. The reinforcement learning formulation requires reward signals (valence, Level 1) before state representations (schemas, Level 2) can be learned, because there is nothing for the learning process to improve upon without a criterion of better and worse. No alternative ordering is coherent. The construction order is not contingent; it is entailed by the structure of the problem.

The strongest version of the claim is conditional: given the RSP framework’s assumptions, the three-level ordering is logically required. The weaker but still significant version is that convergent evidence from four independent mathematical formalisms, six philosophical traditions, and the developmental and evolutionary record all point to the same architectural structure — which is powerful evidence even if it falls short of strict transcendental necessity.

But the argument must go further. This section has explained what feelings are (meaningful integrations of core affect through schemas with concepts) and sketched the three-level architecture. I have not yet explained the subjective character—the feeling of feeling. Why should meaningful integration feel like anything at all? The next section argues that the missing piece is Level 3b: recursive social prediction redirected at the self. The "strange loop" of self-modeling, predicting your own future states by modeling yourself as others model you, is what transforms meaningful integration into phenomenal consciousness. I support this claim through evolutionary, developmental, computational, and clinical evidence.

I have established that conscious experiences are meaningful integrations: biological core affect organized through foundational schemas and elaborated through cultural concepts. But this still leaves a puzzle. Why should these integrations be accompanied by phenomenology? Why is there "something it is like" to undergo meaningful integration?

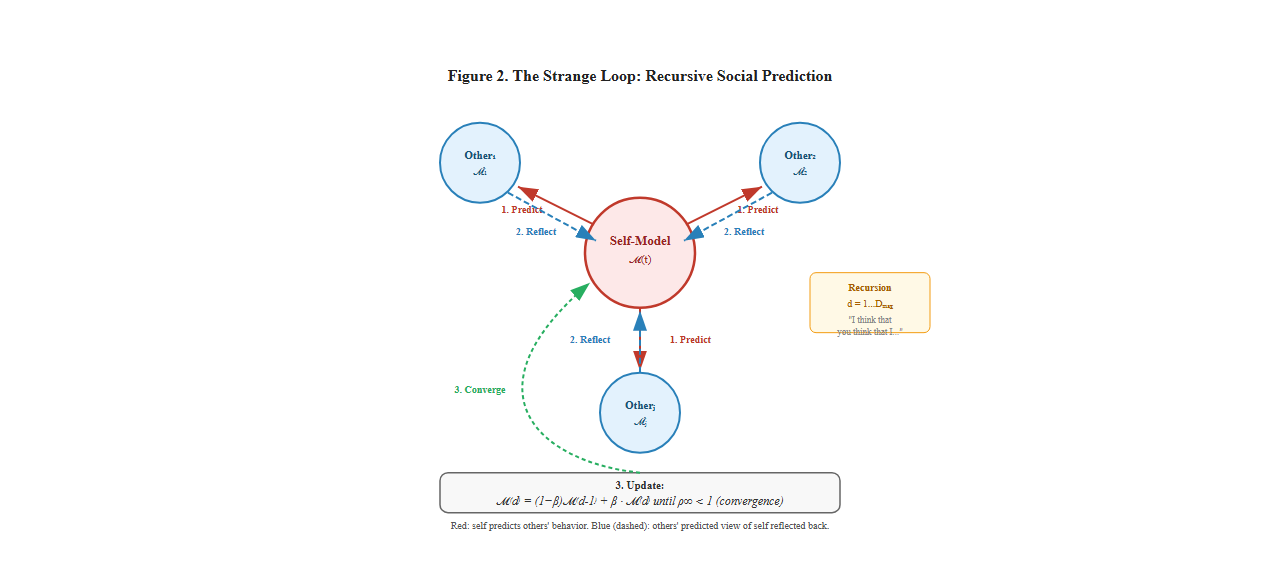

The answer, I propose, is that the feeling of consciousness is the recursive process of social prediction applied to oneself. Consciousness feels like something because the brain models itself as a social entity whose future states need to be predicted for effective social navigation.

This is not a separate feature added to meaningful integration. It IS what meaningful integration becomes when the schemas include self-modeling and when that self-model is used predictively in social contexts.

Hofstadter (1979) identified consciousness as involving a "strange loop," a recursive, self-referential structure where consciousness observes itself observing itself in endless recursion. But Hofstadter left the functional purpose of this recursion somewhat mysterious. Why should consciousness loop back on itself?

The answer: the strange loop is consciousness creating a predictive model of itself for future thoughts. The recursive quality isn’t consciousness admiring itself in a mirror. It is consciousness generating a forward model of itself that can be run into the future to predict what it will think, feel, and do next.

Your brain is fundamentally a prediction machine (Friston, 2010; Clark, 2016). It constantly generates predictions about sensory inputs, motor consequences, environmental changes, and (crucially) its own future states. To predict your own future states effectively, your brain needs a model of itself: its current state, its dynamics, its tendencies, its patterns.

The recursive quality of consciousness, the way you can observe yourself thinking, is not philosophical curiosity but functional necessity. Consciousness creates and continuously updates a self-model that enables prediction of future mental states.

Consider deciding what to say in a conversation. You don’t speak randomly. You:

This requires running simulations of your own future mental states. You need a model of yourself to predict "if I say this, I’ll feel embarrassed" or "if I say this, I’ll feel satisfied." The strange loop, the recursive self-observation, is consciousness creating and maintaining this predictive self-model.

What happens when self-modeling breaks down:

These clinical cases show that predictive self-modeling is not abstract philosophy. It is a concrete cognitive function, and when impaired, consciousness changes in specific measurable ways.

But here’s the crucial insight: you model yourself primarily in relation to others. The self-model is not a solipsistic internal representation; it is inherently relational, structured around social contexts and social predictions.

Consider your conscious experience right now. You’re not experiencing abstract thoughts in a vacuum. You’re experiencing yourself as someone reading something written by someone else, trying to understand ideas, forming potential responses, perhaps agreeing or disagreeing. Your consciousness is structured around relationships—a model of yourself in relation to me (the author), in relation to these ideas, in relation to potential future conversations about these ideas.

This relational quality is not peripheral. It is central. Consider how much of conscious experience involves modeling others:

Even in solitude, thinking remains implicitly relational:

Consciousness is continuously modeling self in relation to others, even when those others are your future self, imagined others, or internalized social norms.

Humans are intensely social creatures. Our evolutionary success depended on navigating complex social environments: cooperating with some, competing with others, forming alliances, detecting deception, coordinating group activities. Effective social navigation requires sophisticated models not just of others but of ourselves as seen by others.

The crucial evolutionary insight: To predict how others will respond to you, you must predict what they think of you. To predict what they think of you, you must model yourself as they see you. This requires consciousness—a self-model that can be viewed from others’ perspectives.

The neural machinery that evolved for theory of mind (modeling others’ mental states) was recruited for self-modeling. Graziano’s (2013) Attention Schema Theory makes this explicit: consciousness emerges when the brain’s mechanism for modeling others’ attention gets applied to modeling its own attention. The same neural regions (temporoparietal junction, superior temporal sulcus) that compute "Person X is aware of Y" also compute "I am aware of Y."

This is why consciousness is:

Now it becomes possible to answer the original question: Why should meaningful integration feel like anything?

Because the feeling IS the recursive social prediction process itself, experienced from within.

When your brain:

…this integrated process doesn’t merely produce a feeling as a separate by-product. The integration, prediction, and recursion CONSTITUTE the feeling. Phenomenology is not something added to the process; it is what the process is like from inside the system.

Think about it this way. Visual experience isn’t something added to visual processing—it’s what visual processing is like from inside the visual system. Musical experience isn’t something layered onto auditory pattern-processing; it is what that processing is like from inside. Conscious feeling, similarly, isn’t something added to recursive self-modeling. It is what recursive self-modeling in social contexts IS from inside.

This explains why consciousness is continuous and self-sustaining. The predictive loop maintains itself:

Moment t: Your current state (thoughts, feelings, sensations) generates predictions about moment t+1. Moment t+1: Those predictions shape what actually happens (attention, interpretation, response). The prediction error between predicted and actual state updates the self-model. Updated model generates new predictions for moment t+2. The cycle continues, creating the continuous stream of consciousness.

This is not a homunculus watching an internal screen. It’s a self-organizing predictive system where each moment generates the next through prediction, with the self-model continuously updated based on prediction errors. The loop sustains itself through neural energy, creating what William James called the "stream" of consciousness.

The social context is crucial: these predictions are not just about what you’ll think but about how your thoughts will affect others and how others’ responses will affect you. You’re constantly running social simulations: "If I think X, I might say Y, they might respond Z, I would then feel W…" These simulations require recursive self-modeling in social space.

Return now to the explanatory gap. Why should physical processes create subjective experience?

Traditional framing (wrong): Physical processes (neurons firing) somehow produce separate phenomenal properties (raw qualia). Gap appears unbridgeable.

Meaningful integration framing (better): Physical processes implement meaningful integration of affect, schemas, and concepts. But why should integration feel like anything?

Social-predictive framing (complete): Physical processes implement recursive self-modeling for social prediction. This recursion, experienced from within the system executing it, constitutes phenomenology. There is nothing separate to explain; the feeling IS the recursive modeling process.

The gap dissolves because:

Functional role is clear: Recursive self-modeling enables social prediction, which is evolutionarily crucial for social species. Consciousness has a clear adaptive purpose.

Mechanism is specifiable: The neural structures can be identified (TPJ, mPFC, posterior cingulate), the schemas they implement (attention schema, self-schema), and the recursive loops they create.

Development is tractable: One can track how recursive self-modeling emerges through social interaction, creating increasingly sophisticated consciousness.

Phylogenetic gradation: Consciousness isn’t all-or-nothing but scales with social complexity and recursive modeling capacity.

Impairment is predictable: Damage to self-modeling regions produces specific consciousness deficits, as clinical neuroscience confirms.

The question that traditionally seemed unanswerable—why recursive self-modeling should be accompanied by phenomenology rather than occurring "in the dark"—dissolves once it is recognized as malformed. It presupposes that phenomenology is a separate property that could be present or absent while leaving the functional architecture intact. But under the RSP account, phenomenology is not a separate property: it IS what accessible recursive self-modeling is, experienced from the perspective of the system performing it. Asking "why does recursive self-modeling feel like something?" is like asking "why does rotation rotate?" The question mistakes a constitutive relationship for a causal one. The gap is not narrowed; it is closed, because the gap depended on treating phenomenology as ontologically separate from the process that constitutes it.

The transcendental argument developed in §III reinforces this dissolution. If valence (Level 1), structured organization (Level 2), and recursive self-modeling (Level 3b) are not contingent features of consciousness but its conditions of possibility — if no alternative architecture could sustain a subject position — then the demand for an explanation of why this architecture produces experience rests on a false premise. The demand assumes that the architecture could exist without the experience and asks what bridges the two. But if the architecture constitutively is the experience, there is no gap to bridge. The transcendental necessity of the three-level ordering — each level logically presupposing the one below it — means that consciousness is not something the architecture generates as an additional output. It is what the architecture is.

Consider an analogy. Why does a whirlpool have a center? Not because something extra is added to rotating water; the center is intrinsic to the rotational structure. Remove the center, and you no longer have a whirlpool.

Similarly: Why does recursive self-modeling have phenomenology? Not because something extra is added to the modeling. The phenomenology is intrinsic to the recursive structure experienced from within. Remove the phenomenology, and you no longer have consciousness. You have unconscious self-monitoring.

The "feeling" of consciousness is the subjective character that emerges when:

This is why purely philosophical zombies (beings physically identical to us but lacking phenomenology) are incoherent. The phenomenology isn’t something that could be absent while leaving recursive self-modeling intact. The phenomenology IS what recursive self-modeling is like from inside. You can’t have the structure without the experience any more than you can have a whirlpool without a center.

The dissolution strategy pursued here invites comparison with several established positions in the philosophy of consciousness. Clarifying these relationships strengthens the case and addresses likely objections.

Illusionism and the reality of consciousness. Frankish (2016) argues that phenomenal consciousness, understood as involving intrinsic, non-functional qualitative properties, is an illusion generated by our introspective mechanisms. RSP shares illusionism’s rejection of "raw qualia" as meaning-independent intrinsic properties. But RSP and illusionism part company on a fundamental point: RSP does not claim that consciousness is illusory. It claims that consciousness is real and constituted by recursive self-modeling. What is illusory, on the RSP account, is only the characterization of consciousness as involving non-functional intrinsic properties. Phenomenal properties exist, but they are functional properties of the recursive architecture, not substrate-intrinsic qualities that float free of organizational structure. In Frankish’s taxonomy, RSP is closest to "strong illusionism," which holds that phenomenal properties are real but functional rather than intrinsic. The difference is that RSP specifies the functional architecture in question: the three-level recursive self-modeling system, not generic functional role.

Access consciousness and phenomenal consciousness. Block (1995) influentially distinguished access consciousness (information poised for use in reasoning, reporting, and behavioral control) from phenomenal consciousness (the "what-it-is-like" character that, Block argues, can overflow cognitive access). RSP rejects this distinction. Under the RSP framework, what Block calls "phenomenal consciousness" that overflows access is a misdescription. All consciousness is constituted by recursive self-modeling, which is itself a form of cognitive access: the system’s own states are modeled, predicted, and made available to action-selection. The "overflow" that Block identifies in empirical paradigms (e.g., Sperling-type experiments where subjects report seeing more than they can report) is better explained within RSP as precision-weighted predictions at Level 2 (schema-organized perceptual states) that have not yet been categorized through Level 3a conceptual resources. These states are structured by schemas and therefore have determinate perceptual character, but they lack the conceptual articulation that would make them reportable in fine-grained detail. This is not phenomenality without access; it is access at one level (schematic organization) without access at another (conceptual categorization).

The explanatory gap in its strongest form. Levine (1983, 2001) formulated the explanatory gap as persisting even for identity theories: even if pain is identical to C-fiber firing, we cannot explain why C-fiber firing should feel painful rather than some other way or no way at all. Levine’s later work (2001) argues that this gap survives even sophisticated functional identity theories, because phenomenal concepts appear to have a non-functional component, a way of grasping their referent that is not exhausted by any functional description. RSP’s response targets exactly this assumption. If phenomenal concepts are themselves predictions generated by the recursive self-model, then what seems like a "non-functional component" of the phenomenal concept is actually the self-model’s first-person access to its own functional states. The concept "pain" as deployed from within the system is the self-model’s prediction about its own nociceptive-affective state. The concept "pain" as described from outside the system is the functional-architectural specification of that same state. These are not two different things requiring a bridge; they are two descriptions of the same recursive self-modeling process, one from the perspective of the system executing it, one from the perspective of the theorist describing it. The gap between them is perspectival, not ontological.

Conceivability and two-dimensional semantics. Chalmers (2010) refines the conceivability argument for dualism using two-dimensional semantics: phenomenal concepts have primary intensions (how they pick out their referent in the actual world) that differ from their secondary intensions (how they pick out referents across possible worlds). This, Chalmers argues, is why zombie worlds are conceivable: the primary intension of "pain" could be satisfied by a state that lacks the phenomenal character pain actually has. RSP’s response: if phenomenal concepts are constituted by recursive self-modeling, as RSP claims, then the primary intension of "pain" IS the self-modeling state that constitutes pain experience. A zombie, lacking the recursive architecture, would lack not just the phenomenology but the very conceptual resources needed to formulate phenomenal concepts. The primary and secondary intensions of "pain" converge on the same recursive self-modeling state, because the concept is not merely about the experience but is part of the self-modeling process that constitutes the experience. Zombie worlds are therefore not conceivable in the relevant sense: the scenario strips away the very architecture that generates the concepts used to describe it.

Ontological commitment: constitutive functionalism. It is worth stating the ontological position explicitly. RSP is a form of constitutive functionalism: phenomenal properties are constituted by, not merely identical to and not merely caused by, specific functional organization. This is closest to Shoemaker’s (2007) realization physicalism, on which mental properties are constituted by the physical properties that realize them, without being reducible to any single physical substrate. The consciousness is in the organizational pattern, the recursive self-modeling architecture, not in the substrate that implements it. This distinguishes RSP from type-identity theory (which ties consciousness to specific neural substrates), from epiphenomenalism (which denies consciousness causal efficacy), and from generic functionalism (which specifies only input-output relations without requiring the specific recursive architecture RSP demands). It also distinguishes RSP from eliminativism: the claim is not that consciousness does not exist, but that what consciousness is has been misdescribed by the qualia-realist tradition.

This social-predictive account integrates perfectly with the three-level architecture:

Level 1: Core Affect

Level 2: Core Schemas

Level 3: Recursive Social Prediction

The feeling doesn’t exist at level 1 alone (core affect is experienced but not self-consciously). It doesn’t exist at level 2 alone (schemas organize but don’t yet feel reflexive). The feeling emerges at level 3 when schemas enable recursive self-modeling in social contexts with conceptual elaboration.

This is why:

The RSP architecture finds independent confirmation from an unexpected quarter: the philosophy of language. Genuine linguistic meaning — not mere symbol manipulation but understanding — requires exactly the same three-level structure that consciousness demands. This convergence deserves attention.

Meaning requires mattering (Level 1). Without something at stake for the meaning-maker, symbols remain pure syntax with no grounded semantics. This is precisely the diagnosis Searle (1980) offered of the Chinese Room: the room manipulates symbols correctly but understands nothing, because nothing is at stake for it. The absence of Level 1 — valenced states that make outcomes matter to the system — is why the room fails to understand. The missing ingredient is not some arbitrary biological property but the affective grounding that gives symbols significance for the system processing them.

Meaning requires stable schemas (Level 2). Without organizational frameworks that persist across contexts, symbols cannot sustain consistent reference. The word "red" means something different in every encounter unless a schema anchors it across uses — connecting the redness of apples, sunsets, and warning signs into a coherent category structured by bodily and spatial experience. This is the insight at the heart of Lakoff and Johnson’s (1999) work on embodied meaning: semantic content is not abstract but grounded in the schemas through which organisms organize their engagement with the world.

Meaning requires the self-referential loop (Level 3b). Without a recursive loop allowing the meaning-maker to know that it means — to meta-represent its own interpretations — there is symbol processing but not understanding. Wittgenstein (1953/2009) argued that meaning requires a "form of life," a situated, practical context in which linguistic activity acquires its point. Under RSP, the form of life is Level 3b: the recursive social-predictive context in which symbols acquire significance because the system models itself as a meaning-maker among other meaning-makers. A system that processes "red" without modeling itself as interpreting "red" does not understand the word; it merely responds to it.

The convergence is evidential. Consciousness and genuine meaning require the same architecture. They are not parallel phenomena — they are co-constituted by the same three-level architecture. That two independent philosophical traditions (philosophy of mind and philosophy of language) converge on the same three-level structure is evidence that the structure is not an artifact of the RSP model but a genuine feature of the phenomenon it describes. The Chinese Room lacks consciousness for exactly the same reason it lacks understanding: it has no Level 1 stakes, no Level 2 schemas, and no Level 3b recursive self-modeling. Searle was right that biology matters — but what matters about biology is not its substrate but the architecture it implements.

Now return to Nagel’s bat. The question "What is it like to be a bat?" assumed:

But under the social-predictive account:

The bat question becomes: "What is the structure of bat self-modeling? How deep is bat recursion? How sophisticated is bat social prediction?" These are tractable empirical questions.

The social-predictive account presented in the previous section makes specific empirical claims about evolution, development, and clinical neuroscience. This section marshals the evidence.

The three-level architecture of consciousness did not appear fully formed. Each level corresponds to a major evolutionary transition, traceable through comparative neuroanatomy, the fossil record, and archaeological evidence. Situating the RSP model within this progression transforms its claims from philosophical assertions into empirically grounded predictions about which species should exhibit which capacities, predictions the comparative evidence confirms.

Valenced states—approach toward the beneficial, withdrawal from the harmful—are among the oldest features of nervous systems. The earliest bilaterian animals (~600 million years ago) possessed neural circuits capable of discriminating beneficial from harmful stimuli. By the Cambrian period (~530 Mya), the arms race between predators and prey had selected for centralized nervous systems with rapid affective appraisal: Is this food or danger?

The critical transition occurred with the emergence of vertebrates (~450 Mya). Early fish developed the subcortical structures—homologs of the amygdala, hypothalamus, periaqueductal gray, and brainstem nuclei—that remain the core affect substrate in all vertebrates today. These structures generate the two dimensions of Level 1: valence (pleasant/unpleasant) and arousal (activated/calm). The Cambridge Declaration on Consciousness (2012) formally recognizes these subcortical systems as homologous across vertebrates, supporting the claim that core affect is phylogenetically ancient and broadly conserved.

The transition to terrestrial life (~350 Mya) enriched Level 1 further. Amphibians and early reptiles faced new homeostatic challenges: thermoregulation, dehydration, gravity. These challenges demanded more complex interoceptive monitoring. Core affect gained richer inputs, not just danger nearby but I am cold, hungry, dehydrated. The affective-homeostatic connection that anchors Level 1 to bodily survival deepened during this period and has remained essentially conserved ever since.

The five core schemas emerged sequentially as mammals evolved increasingly complex body plans, environmental demands, and social structures:

Body schema (~225 Mya): Early mammaliaforms (stem mammals) evolved endothermy, whiskers, and complex musculature requiring sophisticated proprioceptive integration. The body schema—the brain’s dynamic model of the body’s position, boundaries, and capabilities—became essential for the coordinated locomotion demanded by burrowing, climbing, and nocturnal hunting.

Spatial schema (~200 Mya): The mammalian elaboration of the hippocampal formation, particularly its characteristic trisynaptic circuit, enabled flexible cognitive maps supporting path planning, territory management, and mental navigation. Hippocampal homologs exist across all vertebrates, including fish and reptiles. But the mammalian specialization supports allocentric spatial representation that enables mental simulation of routes not yet taken, a capacity that would later support abstract "mental space" for non-spatial reasoning.

Affective-homeostatic schema (~150 Mya): Extended mammalian parental care, particularly lactation and prolonged maternal provisioning (parental care itself is not uniquely mammalian, occurring in crocodilians, birds, and some fish), created new demands on interoceptive regulation. The affective-homeostatic schema integrates bodily needs with motivational states. The mammalian elaboration of ancient neuropeptide systems (oxytocin and vasopressin, whose homologs isotocin and vasotocin exist in fish) for social bonding intensified here. This schema explains why mammalian affect has a distinctly social dimension absent in reptiles: the infant’s distress at separation and the mother’s drive to nurture are affective-homeostatic states organized around social bonds.

Attention schema (~60 Mya): Early primates (emerging in the Paleocene) and other social mammals developed more sophisticated attentional control, and with it the attention schema—the brain’s model of its own attentional states (Graziano, 2013). In social species, attention monitoring became critical for tracking what others attend to (joint attention), laying the groundwork for theory of mind.

Self-schema (~14 Mya in the great ape lineage): Great apes demonstrate mirror self-recognition (Gallup, 1970), indicating a self-schema that integrates body schema, spatial schema, and attention schema into a unified model of the self as a persistent entity distinct from others. The great ape lineage (Hominidae) diverged from other primates approximately 14–16 Mya; orangutans, chimpanzees, bonobos, and gorillas pass the mirror test while most monkeys do not. Mirror self-recognition has also been reported in Asian elephants (Plotnik et al., 2006), Eurasian magpies (Prior et al., 2008), and possibly cleaner wrasse (Kohda et al., 2019), indicating that self-recognition capacities have evolved convergently across distantly related lineages. This complicates the use of MSR as a strict marker for the ~14 Mya dating; the RSP model predicts that these species should show correspondingly limited recursive social prediction depth, which current evidence supports. The self-schema is the architectural bridge between Level 2 and Level 3: without it, the recursive social-predictive loop has no "self" to model.

This sequential emergence makes a testable prediction: in infant development, the schemas should appear in roughly the same order as their evolutionary emergence. Body schema first (present at birth), spatial schema next (reaching and orienting at 3–4 months), affective-homeostatic regulation (6–9 months), attention schema (joint attention at 9–12 months), and self-schema (mirror self-recognition at 18–24 months). The developmental evidence confirms this sequence (Amsterdam, 1972; Meltzoff, 2007; Rochat, 2003).

Level 3—recursive social prediction—emerged uniquely in the hominin lineage, driven by escalating social complexity. Dunbar’s (1998) Social Brain Hypothesis provides the quantitative framework: across primates, neocortical volume correlates not with ecological variables (diet, territory size) but with social group size. The demands of tracking alliances, rivalries, debts, reputations, and deceptions across increasingly large groups selected for neural architectures capable of recursive modeling: If I share with her, he will see, and he might retaliate because he thinks I’m favoring her over him.

The archaeological record allows us to trace this development with surprising specificity:

7–3 Mya (early hominins, groups of 20–50): Sahelanthropus, Ardipithecus, and early Australopithecines lived in small groups in mixed woodland-savanna environments. Cranial capacities of 350–450cc supported Level 2 schemas and basic social cognition: recognizing individuals, remembering alliances, tracking simple hierarchies. Level 3 was nascent at best.

3.3–2 Mya (Lomekwian and Oldowan tools): The earliest known stone tools (Lomekwi 3, Kenya, ~3.3 Mya) and the more widespread Oldowan industry (~2.6 Mya) of Homo habilis (brain ~600–750cc) required planned flaking sequences and, critically, teaching — or at minimum, sustained social observation. Active teaching requires modeling the learner’s state of knowledge: "They don’t yet know how to strike at this angle." This is second-order social prediction—Level 3a (conceptual categorization applied to other minds) emerging under selection pressure.

1.8 Mya–800 Kya (Homo erectus, groups of 80–100): Homo erectus represents a cognitive leap: brain sizes of 800–1100cc, Acheulean hand axes requiring extended multi-step production sequences (chaînes opératoires), evidence of controlled fire use (Wonderwerk Cave, South Africa), and migration out of Africa. Tracking alliances, debts, and deceptions across groups of 80–100 individuals demands recursive modeling. The strange loop was beginning to operate in earnest.

800–300 Kya (archaic humans, groups of 100–130): Homo heidelbergensis and early Homo sapiens (brain 1100–1400cc) developed cooperative big-game hunting requiring real-time social coordination. The Schöningen spears (Germany, ~300 Kya)—carefully crafted throwing spears—imply planned group hunts in which each participant must predict what their hunting partners will do, predict that their partners predict what they will do, and coordinate accordingly. This is full recursive social prediction operating under lethal pressure, where failure of the strange loop means death.

300–70 Kya (Homo sapiens, behavioral modernity): Anatomically modern humans emerged in Africa with brain sizes of 1200–1500cc and, critically, reorganized prefrontal and temporal cortices. Symbolic behavior appeared: ochre pigments at Blombos Cave (~100 Kya), shell beads at Skhul Cave (~100 Kya), geometric engravings. Symbols require shared meaning—the ability to represent something to someone, knowing they will understand it as you intend. This is Level 3a (conceptual categorization) reaching its full potential: meaning is now publicly shared and recursively interpreted.

The behavioral revolution of the Upper Paleolithic (~70–40 Kya), with its blade technologies, composite tools, personal ornaments, long-distance trade networks, and the earliest cave art (Chauvet, ~36 Kya; Lascaux, ~17 Kya), required cumulative culture: knowledge transmitted across generations through teaching, imitation, and symbolic communication. Cumulative culture demands full Level 3: you must model what others know, predict what they need to learn, and represent your own knowledge in transmissible form.

Perhaps the most striking evidence for the RSP model comes from what happened when human societies exceeded the ~150-person limit of direct recursive social prediction (Dunbar’s number). Rather than evolving larger brains, humans invented cultural technologies that extended Level 3 beyond its biological substrate:

Role-based prediction: In agricultural villages (beginning ~12 Kya in the Fertile Crescent), individuals could no longer maintain recursive models of every community member. The solution was categorical social prediction: instead of modeling "Ugg the specific person," you model "the chief," "the healer," "the stranger," social roles with predictable behaviors.

Externalized social memory: Writing (Sumer, ~3200 BCE) externalized social prediction into durable media. Law codes (Ur-Nammu, ~2100 BCE; Hammurabi, ~1750 BCE) codified social predictions as explicit rules: "If you do X, consequence Y will follow." Markets and currency created abstract social prediction: modeling the behavior of strangers through standardized exchange.

Institutional prediction: Cities and civilizations developed increasingly sophisticated cultural institutions (legal systems, religions, bureaucracies, democratic assemblies), each of which functions as a social prediction technology, allowing individuals to coordinate behavior with thousands or millions of people they will never meet.

This sequence reveals something fundamental about the RSP architecture: it has limits. The biological substrate of Level 3 maxes out at roughly 150 recursive social models. When social complexity exceeded this limit, humans did not evolve new neural architecture. They built cultural scaffolding for the existing architecture. The fact that every complex society has independently invented institutions, codified laws, and symbolic systems for extending social prediction beyond face-to-face interaction strongly suggests that the RSP architecture is real, that it has discoverable constraints, and that it shapes the structure of human civilization itself.

The mammalian-centric narrative above requires an important caveat. Corvids (crows, jays, ravens) show cognitive sophistication rivaling great apes: tool manufacture in New Caledonian crows, future planning in Western scrub-jays, episodic-like memory, and possible theory of mind (Emery & Clayton, 2004). Yet they live in relatively small social groups. This suggests that ecological demands (extractive foraging, tool use) can independently drive cognitive sophistication, as the ecological intelligence hypothesis proposes (DeCasien et al., 2017, found that frugivory is a stronger predictor of primate brain size than social group size once phylogeny is controlled).

Cephalopods present an even sharper test case. Octopuses possess approximately 500 million neurons, comparable to a dog, organized in a radically different architecture: two-thirds of their neurons reside in the arms rather than a centralized brain (Mather, 2008; Fiorito & Scotto, 1992). They demonstrate sophisticated problem-solving (opening jars, navigating mazes, using coconut shells as tools), rapid chromatophore-driven camouflage requiring real-time body-environment modeling, and observational learning. Yet octopuses are largely solitary, with minimal social interaction beyond mating. Under the RSP model, this predicts high Level 1 capacity (valenced states driving approach/avoidance) and rich Level 2 schemas (an exceptionally complex body schema for coordinating eight semi-autonomous arms, spatial schema for den navigation and hunting) without Level 3 recursive social prediction. The available evidence supports exactly this: octopuses show behavioral flexibility and environmental intelligence rivaling social mammals, yet no evidence of mirror self-recognition, theory of mind, or recursive self-modeling. They are, in RSP terms, a system with high (Levels 1–2 intelligence) but negligible

(recursive depth) — a prediction formalized in Appendix H (§H.5, Prediction H.4).

The RSP model’s strongest claim is therefore not that all cognitive evolution tracks social complexity, but that the specifically recursive, self-referential character of human consciousness—the strange loop—was shaped by social demands. Corvids may have evolved sophisticated Level 2 schemas and proto-Level 3 capacities through ecological rather than social pressures, which is consistent with the RSP architecture (ecological problems also require predictive modeling) but challenges the exclusively social selection narrative. Similarly, cooperative hunting in wolves, orcas, and lions achieves behavioral coordination through simpler mechanisms (role-based heuristics, shared attention to prey) without necessarily requiring full recursive mental state attribution.

The RSP model predicts that consciousness should have evolved in precisely those lineages where social complexity demanded recursive self-modeling, should have elaborated in proportion to social group size, and should have produced cultural extensions when biological capacity reached its limits. The paleoanthropological record confirms all three predictions:

No rival theory of consciousness generates this pattern of predictions. Integrated Information Theory (IIT) would predict consciousness proportional to neural complexity (Φ) regardless of social demands. Global Workspace Theory would predict consciousness proportional to information broadcasting capacity regardless of social structure. Only the RSP model predicts that the evolution of consciousness should track social complexity specifically, a prediction the comparative and archaeological evidence consistently supports.

The human brain comprises approximately eighty-six billion neurons, each connecting to thousands of others through synapses to create between one hundred trillion and five hundred trillion total synaptic connections. Parallel processing across these networks produces computational throughput on the order of 10^16 operations per second. The essential point for the RSP model is that none of this computational activity is itself conscious. Consciousness is not an observation of neural processing; it is what it is like, from the inside, to be a brain whose computations achieve the specific integrative pattern described by the three-level architecture.

This substrate-level reality supports the meaningful integration framework directly. By the time any content reaches consciousness, it has already been extensively processed, categorized, and integrated across all three levels: there is no stage at which "raw, pre-conceptual phenomenal qualities" exist to be subsequently interpreted. The explanatory gap appears unbridgeable only when this intermediate processing is overlooked; once recognized, the question shifts from how physical events transform into subjective qualities to what organizational patterns of computation constitute experience from the first-person perspective. The RSP architecture identifies that organizational pattern: what reaches awareness is specifically what has been integrated through core affect (Level 1), organized through schemas (Level 2), categorized through concepts, and woven into the recursive self-model (Level 3). The brain’s computational power is necessary but not sufficient; what matters is how that computation is organized through the three-level architecture.

The three-level RSP architecture maps onto a transition well understood in information theory: from raw data, through structured information, to self-referential computation. Level 1 core affect functions as continuous, undifferentiated signal, analogous to unprocessed sensor readings. Level 2 schemas impose categorical structure on that signal, transforming it into relationally organized representations. Level 3 introduces self-reference: the prediction-error loop (predict future self-state, compare to actual, update model) is structurally identical to predictive coding, and the recursive quality — a system modeling itself modeling itself — is what emerges when computation takes itself as input.

This parallel matters philosophically because the transition from data to structured information to computation generates no conceptual mystery in information theory. The puzzle arises only at the self-referential step. If consciousness follows this same architectural pattern, then the hard problem is not about an ineffable extra ingredient but about what happens when a sufficiently complex information-processing system becomes recursively self-modeling. The explanatory gap falls not between physics and phenomenology but between computation and self-referential computation — a gap that is substantive but not metaphysical.

One crucial disanalogy strengthens the argument. Unlike genuinely neutral binary data, Level 1 core affect is already minimally meaningful: already valenced, already carrying survival relevance. Consciousness therefore emerges not from meaningless substrate but from something already minimally meaningful being progressively structured (Level 2) and then recursively modeled (Level 3). This is why the RSP model avoids both the hard problem (how does consciousness emerge from something utterly non-conscious?) and the combination problem facing panpsychism (how do micro-experiences combine into macro-experience?). Core affect is the biological ground floor — already valenced, already meaningful in the minimal sense that matters for survival — upon which schemas and recursive modeling build the full architecture of phenomenal experience.

This social-predictive account explains developmental patterns:

0-18 months: Infants have core affect organized through developing schemas (body, spatial) but lack sophisticated self-models. They have experience but not yet self-conscious experience. They cannot yet model themselves as others see them.

18-24 months: Mirror self-recognition emerges. Infants recognize themselves in mirrors, indicating a developing self-model. They begin to understand themselves as entities that can be perceived by others.

4-5 years: Explicit theory of mind develops. Children pass false-belief tasks, demonstrating they understand that others can have different knowledge and beliefs (Wellman et al., 2001; implicit precursors appear earlier, around 15 months). Crucially, this requires both modeling others as having mental states AND modeling oneself as having mental states that differ from others’. The recursive loop begins operating: "I know X, but Mom doesn’t know X, so if I want her to know X, I must tell her."

4-7 years: Autobiographical memory develops. Children construct narratives about their past selves, creating continuity. The self-model extends across time. They can predict their future mental states and remember past mental states as belonging to the same "I."

7+ years: Sophistication increases. Children become better at taking others’ perspectives, understanding complex social dynamics, predicting social consequences. The recursive loops deepen: "They think that I think that they think…"

As these capacities develop, consciousness becomes richer. The child develops a stronger sense of self as continuous entity, becomes able to think about their own thinking, to reflect on experience, to imagine how others see them. The strange loop elaborates and the self-model integrates with increasingly sophisticated social predictions.

Why this ordering is mathematically necessary. The formal appendixes show that the Level 1 → 2 → 3 developmental sequence is not merely an empirical observation but an architectural constraint of the RSP framework. The free energy formulation (Appendix B) requires to be functional before

can minimize, because Level 2 prediction errors

depend on stable Level 1 expectations

. The reinforcement learning formulation (Appendix C) requires a reward signal (valence, Level 1) before state representations (schemas, Level 2) can be learned through unsupervised exploration — play is the infant’s exploration policy, driven by intrinsic reward. The control-theoretic formulation (Appendix D) requires inner control loops to be stable before outer loops can be commissioned — the cascade design principle

implies a construction order matching the developmental sequence. In all three formalisms, the architecture cannot be assembled top-down or simultaneously; it must be built from the bottom up, exactly as observed in human development.

The evolutionary arc described above explains the gradations of consciousness we observe across phylogeny. Each species’ consciousness reflects the level of the RSP architecture its lineage has reached, and critically, the social complexity that drove its development:

Simple vertebrates (fish, amphibians, reptiles): These animals possess Level 1 core affect—the phylogenetically ancient subcortical systems generating valence and arousal (~450 Mya heritage)—and rudimentary Level 2 schemas (body, spatial). Their experience is valenced and organized but not recursive. A fish navigating a reef has affective responses to threats and rewards organized through body and spatial schemas, but does not model itself as an entity with a persistent identity.

Non-social mammals (cats, bears, hedgehogs): These species have richer Level 2 schemas including proprioceptive body schema, spatial schema (hippocampal cognitive maps), and affective-homeostatic schema (the mammalian innovations of ~250–150 Mya). They can predict their own immediate states. A cat predicts its own hunger, fatigue, and interest in prey, enabling phenomenology richer than mere affective response. Even solitary mammals retain the neural architecture for self-modeling that originally evolved for social purposes, since their ancestors were social.

Social mammals (elephants, dolphins, wolves, some primates): These animals have sophisticated attention schemas and rudimentary self-schemas, enabling recognition of self in mirrors (in some species), longer-term memory integration, and basic social prediction. Elephant herds, dolphin pods, and wolf packs require modeling other individuals’ likely behavior—a proto-Level 3 capacity. But the recursive depth is limited: they predict what others will do, yet show limited evidence of predicting what others think about them.

Great apes (chimpanzees, bonobos, orangutans, gorillas): Great apes possess full self-schemas (mirror self-recognition, emerging in the great ape lineage ~14 Mya) and theory-of-mind precursors. They can model themselves from others’ perspectives to some degree and engage in tactical deception (Byrne & Whiten, 1988). Their social groups of 30–60 individuals demand recursive social prediction, but their Level 3 remains limited by the absence of linguistic concepts and cumulative culture. A chimpanzee can model "he thinks I’m submissive," but cannot model "he thinks I think he thinks I’m submissive."

Humans: Full recursive self-modeling with linguistic elaboration, operating within social groups that have exceeded Dunbar’s number through cultural extensions. We can model ourselves modeling others modeling us, ad infinitum. We can construct elaborate narratives about our past and future selves. We can use culturally learned concepts to create fine-grained experiential distinctions unavailable to any other species. The richness of human consciousness is not a product of brain size alone. It is the product of the recursive social prediction architecture calibrated through years of social interaction, elaborated through language, and extended through cultural institutions.

The richness of consciousness thus correlates with: (1) sophistication of core schemas, (2) depth of recursive self-modeling, (3) complexity of social environment and demands, and (4) availability of cultural concepts for elaboration. This is not merely a descriptive taxonomy. It is a set of predictions that the comparative evidence confirms.

The strongest empirical support for Level 3’s social-predictive architecture comes from tragic natural experiments: cases where human biology is intact but social interaction is absent or severely curtailed during development. If the RSP model is correct, these individuals should show preserved Levels 1-2 (core affect and basic schemas) with selectively impoverished Level 3 (recursive self-modeling, theory of mind, emotional granularity). This is precisely what we observe.